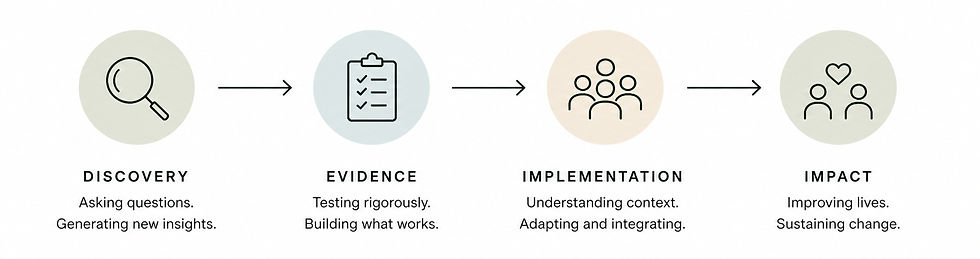

Implementation Science: From Discovery to Impact

- Melissa Boswell

- May 1


I’ve always been drawn to science because of discovery. Understanding how the body works, asking better questions, and finding patterns that weren’t obvious before.

But over time, I started to question: What is discovery without impact?

You can build something that works. You can validate it. You can publish it. But if it never actually reaches people in a meaningful way, something is incomplete.

A few weeks ago, I took a course through Wake Forest Executive Education on Implementation in a Learning Health System. I didn’t fully realize before that implementation science is an entire field of study, but sitting in that room, it felt like something I had been circling for a while finally had a name.

It is also a much bigger field than I expected. There are well over 100 frameworks, each offering a different way to think about how evidence-based solutions move into the real world.

Some of the most widely used include CFIR (the Consolidated Framework for Implementation Research) and EPIS (Exploration, Preparation, Implementation, Sustainment), which help you understand context and guide implementation. Others, like RE-AIM and PRISM, are often used to evaluate impact and outcomes once something is in practice.

At first, all of the frameworks and terms felt overwhelming. But over time, I realized the goal is not to use all of them. It is about understanding enough to see the problem more clearly and to pull out what is useful.

A few ideas stuck with me and have changed how I think about this work.

One of the simplest ideas from the course turned out to be one of the most important.

There is a difference between effectiveness and implementation.

Effectiveness asks whether something works. Implementation asks how you get people to actually use it.

Those are completely different problems.

In research, we spend years answering the first question. In the real world, the second question is often what matters. Implementation science focuses on what happens after something is proven to work, examining how individuals, teams, and systems adopt and sustain it over time.

It also accepts something that traditional science often tries to control for.

The real world is messy.

People are different. Settings are different. What works in one place may not work in another. What works for one person may not work for the next. Instead of trying to eliminate that variability, implementation science works within it.

Complexity is not a problem; it is the nature of doing real-world work.

Another idea that stayed with me is the concept of determinants: the barriers and facilitators that make it harder or easier for something to actually be used.

These include time, cost, workflow, incentives, beliefs, and leadership support.

Instead of asking why something is not working, you start asking what is getting in the way. And then an important question: which of those things can we actually change?

That reframing feels simple, but it shifts where to focus your effort, especially when the options can feel endless.

Something else that became clearer is how many layers are involved in implementation.

There is the individual level: patients and providers, with their motivation, capacity, and beliefs. There is the team or clinic level: workflows, communication, and culture. Then there is the organizational level: leadership priorities, financial models, and incentives.

Each level requires different approaches. What motivates a patient is not what motivates a health system executive. What makes something easy for a provider is not necessarily what makes it scalable for an organization.

This helped me better understand something I’ve been experiencing. Direct-to-consumer and healthcare system implementation are fundamentally different problems. They require different strategies, different messaging, and different measures of success.

One idea I’m still thinking about is sustainability. Not as something that happens after implementation, but as something you have to consider from the beginning. Can this be delivered consistently over time? Will people keep using it? Will it still fit as the system evolves? Will it continue to create value?

It is easy to focus on launching something. It is much harder to imagine what it will look like one year or five years from now.

This course did not give me all the answers (that would be boring anyway). It did, however, give me a new lens.

I now want to approach scientific developments more intentionally, thinking about where something will live, who needs to be involved, what might get in the way, and what it would actually take for something to last. I also see implementation as its own discipline now, not just something that happens after the real work is done.

Rethink Health came out of research. We developed an evidence-based program to help people with osteoarthritis move more and feel more confident in their bodies.

At the beginning, my mindset was simple. Build something that works, then share it. What I did not fully understand at the time was how many layers exist between those two steps.

Getting something into people's hands is not just about distribution or partnerships. It is a system, and that system is full of people with different motivations, workflows that are already overloaded, incentives that may or may not align, and real constraints on time, cost, and attention.

I found myself asking questions without clear frameworks. Where should this live? A clinic? Direct to consumer? Both? Who needs to be involved for this to actually be used? What would make a clinician recommend this to a patient? What would make a patient follow through?

I haven't been figuring this out in isolation. I’ve had thoughtful advisors who have helped shape how I think about the business, the product, and the strategy. At the same time, they are not always the people delivering the program or experiencing it day to day, which creates a gap of its own. So a lot of this has been a mix of intuition, input from others, and real-world testing to see what actually happens.

And I’m still in that process.

Taking this course didn’t resolve those questions. If anything, it made me realize how much more there is to consider, and how many of these challenges are inherent to doing this kind of work. Even if I had been thinking about implementation from the very beginning, I would still be navigating tradeoffs, constraints, and unknowns now.

What has changed is that I have better ways to see the problem(s).

A lot of people are building things that could help others. Programs, tools, research, ideas. But there is often a gap between building something valuable and actually getting it used.

Implementation science sits in that gap.

For me, this experience made it clear that the work is not done when something works.

In many ways, that is just the beginning.

If you are looking for tools on implementation science, I would highly recommend the course. Other great resources can be found at the University of Washington Implementation Science Resource Hub and the Wake Forest CTSI Implementation Science.
